Read Preference Reference

Read preference describes how MongoDB clients route read operations to the members of a replica set.

By default, an application directs its read operations to the primary member in a replica set.

| Read Preference Mode | Description |
|---|---|
| primary | Default mode. All operations read from the current replica set primary. |
| primaryPreferred | In most situations, operations read from the primary, but if it is unavailable, operations read from secondary members. |
| secondary | All operations read from the secondary members of the replica set. |
| secondaryPreferred | In most situations, operations read from secondary members, but if no secondary members are available, operations read from the primary. |
| nearest | Operations read from the member of the replica set with the least network latency, irrespective of the member’s type. |
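Clients usually pick one of these modes when they connect. As a minimal sketch, the hypothetical helper below assembles a connection string using the standard `readPreference` and `readPreferenceTags` connection string options; the helper itself, the host names, and the replica set name `rs0` are illustrative assumptions. Drivers also let you set the mode per operation.

```javascript
// The five read preference modes from the table above.
const READ_PREFERENCE_MODES = [
  "primary",
  "primaryPreferred",
  "secondary",
  "secondaryPreferred",
  "nearest",
];

// Hypothetical helper: builds a connection string whose readPreference
// (and optional readPreferenceTags) options apply to every read on the client.
function buildConnectionString(hosts, replicaSet, mode, tagSet) {
  if (!READ_PREFERENCE_MODES.includes(mode)) {
    throw new Error(`unknown read preference mode: ${mode}`);
  }
  if (mode === "primary" && tagSet) {
    // Tag sets cannot be combined with the primary mode.
    throw new Error("primary read preference does not accept tag sets");
  }
  const options = [`replicaSet=${replicaSet}`, `readPreference=${mode}`];
  if (tagSet) {
    // A tag set is written as comma-separated key:value pairs, e.g. dc:east.
    const pairs = Object.entries(tagSet).map(([key, value]) => `${key}:${value}`);
    options.push(`readPreferenceTags=${pairs.join(",")}`);
  }
  return `mongodb://${hosts.join(",")}/?${options.join("&")}`;
}

console.log(
  buildConnectionString(["db1.example.net:27017", "db2.example.net:27017"], "rs0", "secondaryPreferred")
);
// mongodb://db1.example.net:27017,db2.example.net:27017/?replicaSet=rs0&readPreference=secondaryPreferred
```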

Note

The read preference does not affect the visibility of data; i.e., clients can see the results of writes before they are made durable:

- Regardless of write concern, other clients using "local" (i.e. the default) readConcern can see the result of a write operation before the write operation is acknowledged to the issuing client.

- Clients using "local" (i.e. the default) readConcern can read data which may be subsequently rolled back.

For more information on read isolation level in MongoDB, see Read Isolation, Consistency, and Recency.

Read Preference Modes

- primary

All read operations use only the current replica set primary. [6] This is the default read mode. If the primary is unavailable, read operations produce an error or throw an exception.

The primary read preference mode is not compatible with tag sets. If you specify a tag set with primary, the driver produces an error.
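The primary mode reduces to two rules: reject tag sets, and fail rather than fall back when no primary is available. The sketch below models those rules in plain JavaScript; the member objects and the `selectForPrimaryMode` function are hypothetical and not part of any driver.

```javascript
// Hypothetical member model for this sketch only:
// { name: string, type: "primary" | "secondary" | "arbiter", available: boolean }
function selectForPrimaryMode(members, tagSets = []) {
  if (tagSets.length > 0) {
    // primary mode rejects tag sets instead of ignoring them.
    throw new Error("tag sets are not allowed with primary read preference");
  }
  const primary = members.find((m) => m.type === "primary" && m.available);
  if (!primary) {
    // No fallback to secondaries: the read fails.
    throw new Error("no primary available");
  }
  return primary;
}

const primaryModeSet = [
  { name: "db1", type: "primary", available: true },
  { name: "db2", type: "secondary", available: true },
];
console.log(selectForPrimaryMode(primaryModeSet).name); // db1
```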

- primaryPreferred

In most situations, operations read from the primary member of the set. However, if the primary is unavailable, as is the case during failover situations, operations read from secondary members.

When the read preference includes a tag set, the client reads first from the primary, if available, and then from secondaries that match the specified tags. If no secondaries have matching tags, the read operation produces an error.

Since the application may receive data from a secondary, read operations using the primaryPreferred mode may return stale data in some situations.
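A minimal sketch of the primaryPreferred rules above, using a hypothetical member model (`name`, `type`, `available`, `tags`): an available primary is always chosen, and only during failover are secondaries filtered by the tag set. The function returns the eligible candidates; the final choice among them by latency is not modeled here.

```javascript
// Hypothetical member model: { name, type, available, tags }.
function matchesTagSet(member, tagSet) {
  const tags = member.tags || {};
  return Object.entries(tagSet).every(([key, value]) => tags[key] === value);
}

function candidatesForPrimaryPreferred(members, tagSet) {
  const primary = members.find((m) => m.type === "primary" && m.available);
  if (primary) {
    // An available primary is always used; the tag set applies only to secondaries.
    return [primary];
  }
  // Failover: fall back to available secondaries that match the tag set.
  const secondaries = members.filter(
    (m) => m.type === "secondary" && m.available && (!tagSet || matchesTagSet(m, tagSet))
  );
  if (secondaries.length === 0) {
    throw new Error("no primary and no secondary matching the tag set");
  }
  return secondaries;
}

const failoverSet = [
  { name: "db1", type: "primary", available: false },
  { name: "db2", type: "secondary", available: true, tags: { dc: "east" } },
  { name: "db3", type: "secondary", available: true, tags: { dc: "west" } },
];
console.log(candidatesForPrimaryPreferred(failoverSet, { dc: "east" }).map((m) => m.name)); // [ 'db2' ]
```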

- secondary

Operations read only from the secondary members of the set. If no secondaries are available, the read operation produces an error or exception.

Most sets have at least one secondary, but there are situations where no secondary is available. For example, a set with a primary, a secondary, and an arbiter has no available secondary if that secondary is in the RECOVERING state or is unavailable.

When the read preference includes a tag set, the client attempts to find secondary members that match the specified tag set and directs reads to a random secondary from among the nearest group. If no secondaries have matching tags, the read operation produces an error. [2]

Read operations using the secondary mode may return stale data.
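The sketch below models the secondary mode with a hypothetical member model, including the primary-secondary-arbiter case described above. The `random` parameter is an assumption added so the random choice can be made deterministic; a real driver would first narrow the candidates to the nearest group.

```javascript
// Hypothetical member model: { name, type: "primary" | "secondary" | "arbiter", available, tags }.
// `random` is an assumption that makes the random choice testable.
function selectForSecondaryMode(members, tagSet, random = Math.random) {
  const candidates = members.filter(
    (m) =>
      m.type === "secondary" &&
      m.available &&
      Object.entries(tagSet || {}).every(([key, value]) => (m.tags || {})[key] === value)
  );
  if (candidates.length === 0) {
    // The primary is never used in this mode, and arbiters hold no data.
    throw new Error("no available secondary matches the read preference");
  }
  // Uniform random pick; a real driver first narrows the candidates to the nearest group.
  return candidates[Math.floor(random() * candidates.length)];
}

// Primary-secondary-arbiter set whose only secondary is in the RECOVERING state.
const psaSet = [
  { name: "db1", type: "primary", available: true },
  { name: "db2", type: "secondary", available: false },
  { name: "arb", type: "arbiter", available: true },
];
try {
  selectForSecondaryMode(psaSet);
} catch (err) {
  console.log(err.message); // no available secondary matches the read preference
}
```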

- secondaryPreferred

In most situations, operations read from secondary members, but if no secondary members are available (for example, when the set consists of a single primary and no other members), read operations use the set’s primary.

When the read preference includes a tag set, the client attempts to find a secondary member that matches the specified tag set and directs reads to a random secondary from among the nearest group. If no secondaries have matching tags, the client ignores tags and reads from the primary.

Read operations using the secondaryPreferred mode may return stale data.
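The key difference from secondary is the fallback: when no secondary matches, secondaryPreferred drops the tags and uses the primary instead of failing. A minimal sketch, again with a hypothetical member model and function name:

```javascript
// Hypothetical member model: { name, type, available, tags }.
function candidatesForSecondaryPreferred(members, tagSet) {
  const secondaries = members.filter((m) => m.type === "secondary" && m.available);
  const matching = tagSet
    ? secondaries.filter((m) =>
        Object.entries(tagSet).every(([key, value]) => (m.tags || {})[key] === value)
      )
    : secondaries;
  if (matching.length > 0) {
    return matching;
  }
  // No matching secondary: ignore the tags and read from the primary instead.
  const primary = members.find((m) => m.type === "primary" && m.available);
  if (!primary) {
    throw new Error("no member available for secondaryPreferred");
  }
  return [primary];
}

const prefSet = [
  { name: "db1", type: "primary", available: true },
  { name: "db2", type: "secondary", available: true, tags: { dc: "west" } },
];
console.log(candidatesForSecondaryPreferred(prefSet, { dc: "east" }).map((m) => m.name)); // [ 'db1' ]
```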

- nearest

The driver reads from the nearest member of the set according to the member selection process. Reads in nearest mode do not consider the member’s type and may read from either the primary or secondaries.

Set this mode to minimize the effect of network latency on read operations without preference for current or stale data.

If you specify a tag set, the client attempts to find a replica set member that matches the specified tag set and directs reads to an arbitrary member from among the nearest group.

Read operations using the nearest mode may return stale data.

Note

All operations read from a member of the nearest group of the replica set that matches the specified read preference mode. The nearest mode prefers low latency reads over a member’s primary or secondary status.

For nearest, the client assembles a list of acceptable hosts based on tag set and then narrows that list to the host with the shortest ping time and all other members of the set that are within the “local threshold,” or acceptable latency. See Read Preference for Replica Sets for more information.
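The two steps in the note above (filter by tag set, then keep the fastest host plus every host within the local threshold) can be sketched as follows. The member model, including the `pingMs` field, is hypothetical; the 15 millisecond default comes from this page's Minimize Latency section, and real clients make the threshold configurable.

```javascript
// Hypothetical member model: { name, type, available, tags, pingMs }.
function nearestGroup(members, tagSet, localThresholdMs = 15) {
  // Step 1: acceptable hosts are data-bearing, available, and match the tag set.
  const acceptable = members.filter(
    (m) =>
      m.type !== "arbiter" &&
      m.available &&
      Object.entries(tagSet || {}).every(([key, value]) => (m.tags || {})[key] === value)
  );
  if (acceptable.length === 0) {
    throw new Error("no member matches the tag set");
  }
  // Step 2: keep the fastest host plus every host within the local threshold.
  const fastest = Math.min(...acceptable.map((m) => m.pingMs));
  return acceptable.filter((m) => m.pingMs <= fastest + localThresholdMs);
}

const latencySet = [
  { name: "db1", type: "primary", available: true, pingMs: 40 },
  { name: "db2", type: "secondary", available: true, pingMs: 12 },
  { name: "db3", type: "secondary", available: true, pingMs: 25 },
  { name: "arb", type: "arbiter", available: true, pingMs: 1 },
];
console.log(nearestGroup(latencySet).map((m) => m.name)); // [ 'db2', 'db3' ]
```

Member type plays no role beyond excluding arbiters, so a nearby primary joins the group just like a secondary would.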

Use Cases

Depending on the requirements of an application, you can configure different applications to use different read preferences, or use different read preferences for different queries in the same application. Consider the following applications for different read preference strategies.

Maximize Consistency

To avoid stale reads, use primary read preference and "majority" readConcern. If the primary is unavailable, e.g. during elections or when a majority of the replica set is not accessible, read operations using primary read preference produce an error or throw an exception.

In some circumstances, it may be possible for a replica set to temporarily have two primaries; however, only one primary will be capable of confirming writes with the "majority" write concern.

- A partial network partition may segregate a primary (P_old) into a partition with a minority of the nodes, while the other side of the partition contains a majority of nodes. The partition with the majority will elect a new primary (P_new), but for a brief period, the old primary (P_old) may still continue to serve reads and writes, as it has not yet detected that it can only see a minority of nodes in the replica set. During this period, if the old primary (P_old) is still visible to clients as a primary, reads from this primary may reflect stale data.

- A primary (P_old) may become unresponsive, which triggers an election in which a new primary (P_new) is elected and begins serving reads and writes. If the unresponsive primary (P_old) starts responding again, two primaries will be visible for a brief period. The brief period ends when P_old steps down. However, during the brief period, clients might read from the old primary P_old, which can provide stale data.
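Both scenarios rest on majority arithmetic: a strict majority of n voting members is floor(n/2) + 1, so two disjoint groups of members can never both contain one. The simplified sketch below assumes every member is a data-bearing voter (it ignores arbiters, which vote but cannot acknowledge writes); the function names are hypothetical.

```javascript
// Smallest number of voting members that forms a strict majority.
function majorityOf(votingMembers) {
  return Math.floor(votingMembers / 2) + 1;
}

// Simplified model: a primary can confirm { w: "majority" } writes only if it
// can reach a strict majority of voting members (counting itself).
function canConfirmMajorityWrites(reachableVotingMembers, votingMembers) {
  return reachableVotingMembers >= majorityOf(votingMembers);
}

// Five-member set split 2 | 3 by a partial network partition.
console.log(canConfirmMajorityWrites(2, 5)); // false: P_old on the minority side
console.log(canConfirmMajorityWrites(3, 5)); // true: P_new on the majority side
```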

To increase consistency, you can disable automatic failover; however, disabling automatic failover sacrifices availability.

Maximize Availability

To permit read operations when possible, use primaryPreferred. When there’s a primary you will get consistent reads [6], but if there is no primary you can still query secondaries. However, when using this read mode, consider the situation described in secondary vs secondaryPreferred.

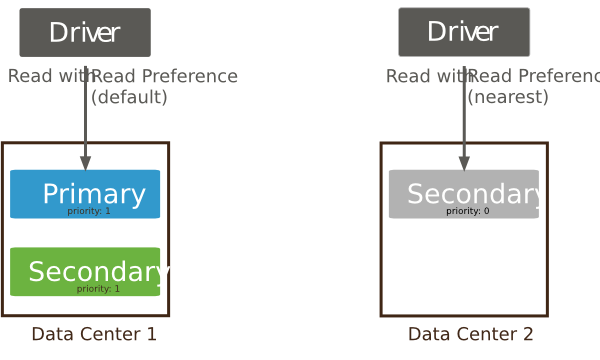

Minimize Latency

To always read from a low-latency node, use nearest. The driver or mongos will read from the nearest member and those no more than 15 milliseconds [3] further away than the nearest member.

nearest does not guarantee consistency. If the nearest member to your application server is a secondary with some replication lag, queries could return stale data. nearest only reflects network distance and does not reflect I/O or CPU load.

Query From Geographically Distributed Members

If the members of a replica set are geographically distributed, you can create replica set tags that reflect the location of each instance and then configure your application to query the members nearby.

For example, if members in “east” and “west” data centers are tagged {'dc': 'east'} and {'dc': 'west'}, your application servers in the east data center can read from nearby members with the following read preference:

```javascript
// Read from the nearest member tagged { 'dc': 'east' }.
db.collection.find().readPref( 'nearest', [ { 'dc': 'east' } ] )
```

Although nearest already favors members with low network latency, including the tag makes the choice more predictable.
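The tag argument is a list of tag sets. A member matches a tag set when its own tags contain every key/value pair in it, and the client tries the tag sets in order, stopping at the first one that matches at least one eligible member. The sketch below models that matching; the member objects, the `rack` tag, and the `central` data center are hypothetical.

```javascript
// Hypothetical member model: { name, tags }.
function matchesTagSet(member, tagSet) {
  const tags = member.tags || {};
  // Every key/value pair in the tag set must appear in the member's tags,
  // so an empty tag set {} matches any member.
  return Object.entries(tagSet).every(([key, value]) => tags[key] === value);
}

// Tries each tag set in order and stops at the first one that matches
// at least one candidate.
function filterByTagSets(candidates, tagSets) {
  for (const tagSet of tagSets) {
    const matched = candidates.filter((m) => matchesTagSet(m, tagSet));
    if (matched.length > 0) {
      return matched;
    }
  }
  return [];
}

const geoSet = [
  { name: "east1", tags: { dc: "east", rack: "1" } },
  { name: "east2", tags: { dc: "east", rack: "2" } },
  { name: "west1", tags: { dc: "west" } },
];
console.log(filterByTagSets(geoSet, [{ dc: "east" }]).map((m) => m.name)); // [ 'east1', 'east2' ]
```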

secondary vs secondaryPreferred

For dedicated queries such as ETL or reporting, you can shift read load off the primary by using the secondary read preference mode. For this use case, secondary is preferable to secondaryPreferred, because secondaryPreferred risks the following situation: if all secondaries are unavailable and your replica set has enough arbiters [1] to prevent the primary from stepping down, the primary receives all traffic from the clients. If the primary cannot handle this load, the queries compete with the writes. For this reason, use read preference secondary rather than secondaryPreferred to distribute these dedicated queries.

| [1] | In general, avoid deploying more than one arbiter per replica set. |
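A back-of-the-envelope sketch of where dedicated reads land in each mode. It assumes, as a simplification, that reads spread evenly across available secondaries; the function name and the request rate are hypothetical. Going from two available secondaries to one doubles the per-secondary load, as footnote [2] notes.

```javascript
// Simplified model: dedicated reads are spread evenly over available secondaries.
function dedicatedReadLoad(mode, readsPerSecond, availableSecondaries) {
  if (availableSecondaries > 0) {
    return { primary: 0, perSecondary: readsPerSecond / availableSecondaries };
  }
  if (mode === "secondaryPreferred") {
    // Every dedicated read falls back to the primary and competes with writes.
    return { primary: readsPerSecond, perSecondary: 0 };
  }
  // secondary mode: the reads fail instead of landing on the primary.
  return { primary: 0, perSecondary: 0, error: "no secondary available" };
}

console.log(dedicatedReadLoad("secondaryPreferred", 1000, 0)); // { primary: 1000, perSecondary: 0 }
console.log(dedicatedReadLoad("secondary", 1000, 0).error); // no secondary available
```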

Read Preferences for Database Commands

Because some database commands read and return data from the database, all of the official drivers support full read preference mode semantics for the following commands:

- group

- mapReduce [4]

- aggregate [5]

- collStats

- dbStats

- count

- distinct

- geoNear

- geoSearch

- parallelCollectionScan

New in version 2.4: mongos adds support for routing commands to shards using read preferences. Previously, mongos sent all commands to shards’ primaries.
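Footnotes [4] and [5] qualify two entries in the command list above. The sketch below encodes the list and those two exceptions as a hypothetical helper that inspects a command document; it is not a driver API and reflects only the commands this page names.

```javascript
// Commands that this page lists as honoring read preference.
const READ_PREFERENCE_COMMANDS = new Set([
  "group", "mapReduce", "aggregate", "collStats", "dbStats",
  "count", "distinct", "geoNear", "geoSearch", "parallelCollectionScan",
]);

// Hypothetical check: may this command document be routed by read preference?
function mayHonorReadPreference(command) {
  const name = Object.keys(command)[0]; // the command name is the first key
  if (!READ_PREFERENCE_COMMANDS.has(name)) {
    return false;
  }
  if (name === "mapReduce") {
    // Only inline mapReduce (out: { inline: 1 }) avoids writing data.
    return Boolean(command.out && command.out.inline === 1);
  }
  if (name === "aggregate") {
    // A pipeline containing $out must run on the primary.
    return !(command.pipeline || []).some((stage) => "$out" in stage);
  }
  return true;
}

console.log(mayHonorReadPreference({ mapReduce: "orders", out: { inline: 1 } })); // true
console.log(mayHonorReadPreference({ aggregate: "orders", pipeline: [{ $out: "totals" }] })); // false
```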

| [2] | If your set has more than one secondary, and you use the secondary read preference mode, consider the following effect. If you have a three member replica set with a primary and two secondaries, and one secondary becomes unavailable, all secondary queries must target the remaining secondary. This will double the load on this secondary. Plan and provide capacity to support this as needed. |

| [3] | This threshold is configurable. See localPingThresholdMs for mongos or your driver documentation for the appropriate setting. |

| [4] | Only “inline” mapReduce operations that do not write data support read preference, otherwise these operations must run on the primary members. |

| [5] | Using the $out pipeline operator forces the aggregation pipeline to run on the primary. |

| [6] | In some circumstances, two nodes in a replica set may transiently believe that they are the primary, but at most, one of them will be able to complete writes with { w: "majority" } write concern. The node that can complete { w: "majority" } writes is the current primary, and the other node is a former primary that has not yet recognized its demotion, typically due to a network partition. When this occurs, clients that connect to the former primary may observe stale data despite having requested read preference primary, and new writes to the former primary will eventually roll back. |